Research - Sign Language Recognition

Automatic Sign Language Recognition (ASLR)

Developing sign language applications for deaf people can be very important: many of them are unable to speak a spoken language, and as a result many are also unable to read or write one. Ideally, a translation system would make it possible to communicate with deaf people. Compared to speech commands, hand gestures are advantageous in noisy environments and in situations where speech would be disturbing, and they are well suited for communicating quantitative information and spatial relationships.

A gesture is a form of non-verbal communication made with a part of the body and used instead of, or in combination with, verbal communication. Most people use gestures and body language in addition to words when they speak. A sign language is a language that uses gestures instead of sound to convey meaning, combining hand shapes, the orientation and movement of the hands, arms or body, facial expressions, and lip patterns. Contrary to popular belief, sign language is not international: as with spoken languages, sign languages vary from region to region, and they are not completely based on the spoken language of their country of origin.

Sign language is a visual language and consists of three major components:

- finger-spelling: used to spell words letter by letter

- word level sign vocabulary: used for the majority of communication

- non-manual features: facial expressions as well as tongue, mouth, and body position

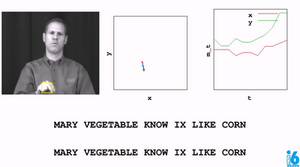

Similar to automatic speech recognition (ASR), our work in automatic sign language recognition (ASLR) focuses on automatically recognizing sign language videos as sequences of glosses, which can later be translated into written text by a statistical machine translation system (see a demo video).
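The two-stage idea (video to glosses, glosses to text) can be illustrated with a toy Python sketch. It assumes a recognizer has already assigned one gloss label per video frame; `frames_to_glosses`, `translate`, the `<sil>` label, and the example glosses are all hypothetical, and the word-for-word lexicon lookup merely stands in for a statistical machine translation system, which would also reorder and inflect words.

```python
"""Toy sketch of a two-stage ASLR pipeline: frame labels -> glosses -> text.

Assumptions (not from the original page): one gloss label per video frame is
already available, and a word-for-word lexicon stands in for statistical MT.
"""
from __future__ import annotations

from itertools import groupby


def frames_to_glosses(frame_labels: list[str], silence: str = "<sil>") -> list[str]:
    """Collapse runs of identical frame labels into one gloss each and drop silence.

    Note: the same gloss signed twice without a silence frame in between
    is merged into a single gloss by this simple collapsing step.
    """
    return [label for label, _ in groupby(frame_labels) if label != silence]


def translate(glosses: list[str], lexicon: dict[str, str]) -> str:
    """Hypothetical word-for-word lookup; unknown glosses are lower-cased as-is."""
    return " ".join(lexicon.get(g, g.lower()) for g in glosses)


if __name__ == "__main__":
    # Hypothetical frame-level recognizer output for a weather-forecast-style utterance.
    frames = ["<sil>", "TOMORROW", "TOMORROW", "RAIN", "RAIN", "RAIN", "<sil>", "NORTH"]
    glosses = frames_to_glosses(frames)
    print(glosses)  # ['TOMORROW', 'RAIN', 'NORTH']
    lexicon = {"TOMORROW": "tomorrow", "RAIN": "rain", "NORTH": "in the north"}
    print(translate(glosses, lexicon))  # tomorrow rain in the north
```

The collapsing step mirrors how frame-level decisions are turned into a label sequence; a real system would instead search over gloss models, as in speech recognition.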


Benchmark Databases for Sign Language Recognition and Translation

Automatic sign language recognition databases used at our institute:

- download - RWTH German Fingerspelling Database: German sign language, fingerspelling, 1400 utterances, 35 dynamic gestures, 20 speakers

- on request - RWTH-PHOENIX Weather Forecast: German sign language database, 95 German weather forecast records, 1353 sentences, 1225 signs, fully annotated, 11 speakers

- download - RWTH-BOSTON-50: American sign language database, 483 utterances, 50 isolated signs, 83 pronunciations, 3 speakers

- download - RWTH-BOSTON-104: American sign language database, 201 sentences, 104 signs, continuous sign language, 3 speakers, with annotated ground-truth hand and head positions for about 15k frames to evaluate tracking algorithms

- on request - RWTH-BOSTON-400: American sign language database, 843 sentences, about 400 signs, continuous sign language, 5 speakers

- download - RWTH-BOSTON-Hands: hand tracking database, 1000 frames with annotated hand positions to evaluate hand tracking algorithms - included in the RWTH-BOSTON-104 database

- on request - ATIS Corpus: Irish sign language database, 680 sentences, about 400 signs, continuous sign language, several speakers, with annotated hand and head positions for about 5.5k frames to evaluate hand tracking algorithms

- external - Corpus NGT: An online corpus of video data from Sign Language of the Netherlands with annotations

- external - BSL Corpus Project
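The list above can also be kept in a small machine-readable form, for example to pick a benchmark by sign language and availability. This is a minimal Python sketch under assumptions: the `Corpus` record, its field names, and the `select` helper are hypothetical; only the database names, sign languages, availability labels, and speaker counts are taken from the list.

```python
"""Hypothetical machine-readable version of the corpus list.

Figures come from the list; field names and the filter helper are
illustrative assumptions, not an official format of the databases.
"""
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Corpus:
    name: str
    sign_language: str       # e.g. "American" for American sign language
    availability: str        # "download", "on request" or "external"
    speakers: Optional[int]  # None where the list gives no exact count


CORPORA = [
    Corpus("RWTH German Fingerspelling Database", "German", "download", 20),
    Corpus("RWTH-PHOENIX Weather Forecast", "German", "on request", 11),
    Corpus("RWTH-BOSTON-50", "American", "download", 3),
    Corpus("RWTH-BOSTON-104", "American", "download", 3),
    Corpus("RWTH-BOSTON-400", "American", "on request", 5),
    Corpus("RWTH-BOSTON-Hands", "American", "download", None),
    Corpus("ATIS Corpus", "Irish", "on request", None),
    Corpus("Corpus NGT", "Netherlands", "external", None),
    Corpus("BSL Corpus Project", "British", "external", None),
]


def select(corpora, sign_language=None, availability=None):
    """Return names of corpora matching the given sign language and/or availability."""
    return [
        c.name
        for c in corpora
        if (sign_language is None or c.sign_language == sign_language)
        and (availability is None or c.availability == availability)
    ]


if __name__ == "__main__":
    print(select(CORPORA, sign_language="American", availability="download"))
    # ['RWTH-BOSTON-50', 'RWTH-BOSTON-104', 'RWTH-BOSTON-Hands']
```

Leaving a filter argument as `None` means "any value", so `select(CORPORA, availability="external")` lists only the external corpora.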

If you are interested in using these sign language recognition databases too, please contact Philippe Dreuw.

Some papers related to the corpora used as tracking and recognition benchmarks in sign language recognition:

- P. Dreuw, J. Forster, and H. Ney. Tracking Benchmark Databases for Video-Based Sign Language Recognition. In ECCV International Workshop on Sign, Gesture, and Activity (SGA), pages 286-297, Crete, Greece, September 2010.

- P. Dreuw, C. Neidle, V. Athitsos, S. Sclaroff, and H. Ney. Benchmark Databases for Video-Based Automatic Sign Language Recognition. In Language Resources and Evaluation (LREC), pages 1-6, Marrakech, Morocco, May 2008.

- P. Dreuw, D. Rybach, T. Deselaers, M. Zahedi, and H. Ney. Speech Recognition Techniques for a Sign Language Recognition System. In Interspeech, pages 2513-2516, Antwerp, Belgium, August 2007. ISCA best student paper award, Interspeech 2007.

More publications related to sign language recognition and translation

Philippe Dreuw. Last modified: 2011-04-07 10:32:01. Created: Wed Dec 22 18:04:32 CET 2004.